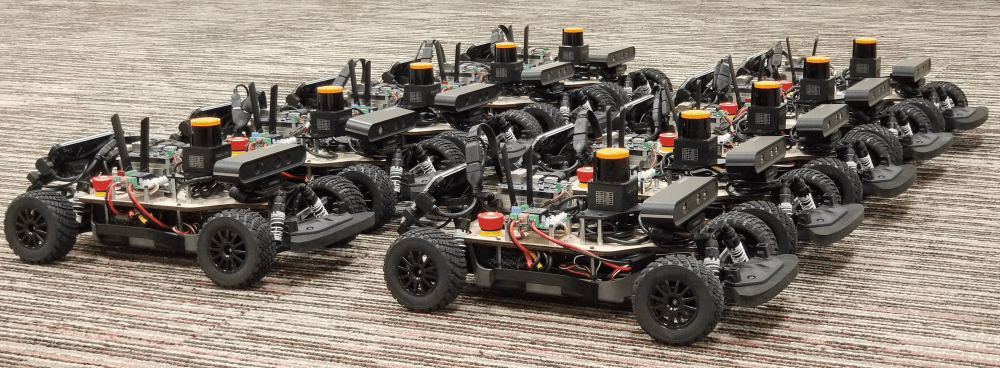

In the fall of 2020, I took a computer science course called Autonomous Robots. It focuses on algorithms that give mobile robots self-driving capabilities, as well as complementary functions like localization and mapping. We were given a 1/10th-scale racecar as our testing platform.

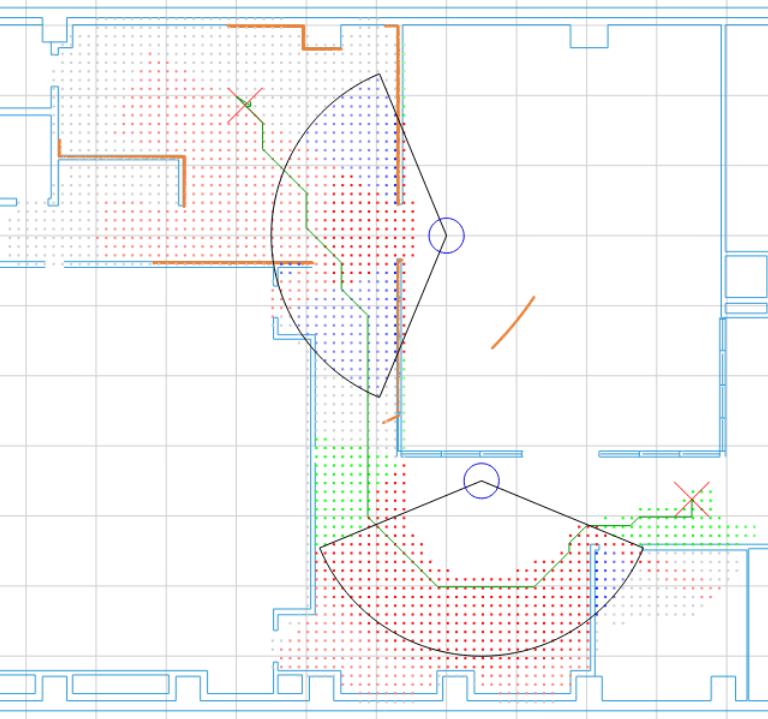

Each car was equipped with a 2D LiDAR, a sensor that returns the distance from the car to surrounding objects. With this input, we implemented obstacle avoidance and a greedy local planner, essentially programming the car to drive forward for as long as possible without crashing. Then we added a particle filter for localization, which estimates the car's actual pose by combining a best guess from the wheel rotations with how well the LiDAR scans match a known map.
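To make the particle filter idea concrete, here is a minimal sketch of its predict / update / resample loop. It is not the code from the class: it assumes a toy 1D corridor with a wall at a known position, where a single range reading stands in for a full LiDAR scan, and the names (`Particle`, `Predict`, `Update`, `Resample`, `EstimatePosition`) and noise parameters are made up for illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

// Toy 1D world (an assumption for this sketch): the car drives along a
// corridor toward a wall at a known position, and one range reading stands
// in for a full LiDAR scan.
struct Particle {
  double x;       // hypothesized car position along the corridor (m)
  double weight;  // how well this hypothesis explains the latest reading
};

// Predict: move every particle by the odometry estimate plus sampled noise.
void Predict(std::vector<Particle>& particles, double odom_delta,
             double motion_stddev, std::mt19937& rng) {
  std::normal_distribution<double> noise(0.0, motion_stddev);
  for (Particle& p : particles) p.x += odom_delta + noise(rng);
}

// Update: weight each particle by a Gaussian likelihood of the observed range
// given the range that particle would expect to see to the wall.
void Update(std::vector<Particle>& particles, double observed_range,
            double wall_x, double sensor_stddev) {
  assert(!particles.empty());
  double total = 0.0;
  for (Particle& p : particles) {
    const double expected_range = wall_x - p.x;
    const double err = (observed_range - expected_range) / sensor_stddev;
    p.weight = std::exp(-0.5 * err * err);
    total += p.weight;
  }
  if (total <= 0.0) {
    // Every weight underflowed to zero: fall back to uniform weights.
    for (Particle& p : particles) p.weight = 1.0 / particles.size();
    return;
  }
  for (Particle& p : particles) p.weight /= total;  // normalize to sum to 1
}

// Resample: low-variance resampling. Particles are drawn in proportion to
// their (normalized) weights, then all weights are reset to uniform.
std::vector<Particle> Resample(const std::vector<Particle>& particles,
                               std::mt19937& rng) {
  assert(!particles.empty());
  const std::size_t n = particles.size();
  const double step = 1.0 / n;
  std::uniform_real_distribution<double> start(0.0, step);
  double target = start(rng);
  double cumulative = particles[0].weight;
  std::size_t i = 0;
  std::vector<Particle> resampled;
  resampled.reserve(n);
  for (std::size_t k = 0; k < n; ++k) {
    while (cumulative < target && i + 1 < n) cumulative += particles[++i].weight;
    resampled.push_back({particles[i].x, step});
    target += step;
  }
  return resampled;
}

// Pose estimate: the weighted mean of all particle positions.
double EstimatePosition(const std::vector<Particle>& particles) {
  double mean = 0.0;
  for (const Particle& p : particles) mean += p.weight * p.x;
  return mean;
}
```

Calling `Predict`, `Update`, and `Resample` once per odometry and range reading keeps the particle cloud clustered around positions that agree with both the wheel motion and the sensor. On the real car the same loop would run over full 2D poses and whole LiDAR scans matched against the map.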

The next task was to add Simultaneous Localization and Mapping (SLAM), a hot topic in self-driving applications. After this, we programmed a global planner that creates a path from point A to point B and follows it using the local planner with a dynamic goal. Finally, my team chose to add human social awareness, resulting in paths that are safe and trustworthy around people. (Reference paper)
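As a rough illustration of what a "dynamic goal" can look like, the sketch below picks the waypoint on the global path that the local planner should aim for next. The selection rule (the first waypoint at or past the one closest to the car that is at least a lookahead distance away) is an assumption for this example, as are the names `Point`, `Distance`, and `SelectDynamicGoal`. It is not necessarily how our planner chose its goal.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

struct Point {
  double x;
  double y;
};

double Distance(const Point& a, const Point& b) {
  return std::hypot(a.x - b.x, a.y - b.y);
}

// Returns the index of the waypoint to hand to the local planner as its goal.
// `path` is the global plan from A to B, `car` is the current position, and
// `lookahead` is how far ahead (in meters) the goal should sit.
std::size_t SelectDynamicGoal(const std::vector<Point>& path, const Point& car,
                              double lookahead) {
  assert(!path.empty());

  // Find the waypoint closest to the car so that progress is never undone.
  std::size_t closest = 0;
  for (std::size_t i = 1; i < path.size(); ++i) {
    if (Distance(path[i], car) < Distance(path[closest], car)) closest = i;
  }

  // Walk forward along the path until a waypoint is far enough away.
  for (std::size_t i = closest; i < path.size(); ++i) {
    if (Distance(path[i], car) >= lookahead) return i;
  }

  // Near the end of the path, the final waypoint (point B) is the goal.
  return path.size() - 1;
}
```

Re-running the selection each control cycle makes the goal slide along the path as the car moves, so the local planner always has a nearby target while still avoiding obstacles on its own.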

Overall, this class was a great step outside of my comfort zone, focusing on mobile robots rather than manipulators. All of our C++ code can be found in this forked repository.